I have written a CLI utility for Ubuntu to import ModSecurity's audit log file into an sqlite database, which should be a great help to people building whitelists to reduce false positives. This supersedes my previous efforts with BASH scripts. Packages are available for Ubuntu Trusty and Utopic (14.04 & 14.10) in my Personal Package Archive on Launchpad. To create my app I had to learn about:

- C++ development on Ubuntu including two third party libraries (Boost Regex and SQLite)

- Version control using Git

- The GNU build system "Autotools"

- How to build .deb packages for Ubuntu and Debian

- How to upload packages to a Personal Package Archive (PPA) on Launchpad

I plan on writing detailed tutorials for most of this, but there's quite a lot to get through so it could take a while!

What is ModSecurity?

If you have read my previous posts on Apache2's security module "ModSecurity" then you may already know what it is. For those of you who haven't used it, ModSecurity is a Web Application Firewall that can be used with a set of rules to "enumerate badness" and decide when to block requests sent to the server. It sits in between Apache and the web applications running on the server, and can therefore intercept malicious requests before they are processed by the app. Probably the most common set of rules is the Open Web Application Security Project's Core Rule Set (OWASP CRS), which is in the Ubuntu repos as modsecurity-crs.

Here's a typical example: Mr Naughty is trying to hack example.com, a website running a vulnerable installation of WordPress on a LAMP server. Mr Naughty is trying to use an SQL injection attack to create a new admin user in the database so that he can deface the site, steal data etc. However, ModSecurity identifies the SQL injection attack contained in the POST variable sent by Mr Naughty and blocks it before it is executed by WordPress. The attack fails :) Sounds great, right?

Why isn't it more widely deployed?

I started learning about ModSecurity after a friend recommended it to me, about a year and a half ago. As an enthusiastic but inexperienced amateur, I really struggled to configure it properly - each rule uses pattern matching to decide what to block, and there are inevitable false positives. This means you can't just install it and expect it to work. Typically you run ModSecurity in "detection only" mode for a time (rules are evaluated but ModSecurity doesn't actually block anything), and then inspect the audit logs to identify where you need to amend the rules to remove those false positives.

The audit log is a text file with sections for each part of the transaction: the data sent to the server, the response sent back, and any rules that were matched. Since the data for each transaction is split over multiple lines, it does not lend itself to being searched with simple line-based utilities like grep. Identifying all of the requests from a certain IP address that triggered a given rule is a non-trivial exercise.
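To make the multi-line structure concrete, here is a minimal Python sketch of splitting an audit log into transactions and sections. It is not how auditlog2db works internally (that is C++); it only illustrates the idea. It assumes the serial audit log layout used by ModSecurity 2.x, where each section starts with a boundary line such as `--c7036611-A--`, section A holds the unique ID and source IP, and matched rules appear in section H as `[id "..."]` tags. The function names and the sample log entry (including its IP addresses and messages) are made up for illustration.

```python
import re

# Boundary lines look like "--c7036611-A--": an ID shared by all sections
# of one transaction, followed by a letter naming the section.
BOUNDARY = re.compile(r"^--([0-9a-fA-F]+)-([A-Z])--$")
# Section A: [timestamp] unique_id source_ip source_port dest_ip dest_port
HEADER = re.compile(r"^\[[^\]]+\] (\S+) (\S+) \d+ \S+ \d+")
# Matched rules appear in section H as tags like [id "950109"]
RULE_ID = re.compile(r'\[id "(\d+)"\]')


def split_transactions(lines):
    """Return {boundary_id: {section_letter: section_text}}."""
    transactions = {}
    current = None  # (boundary_id, section_letter) currently being read
    buffer = []

    def flush():
        # Store the section collected so far, if there is one.
        if current is not None:
            boundary_id, letter = current
            transactions.setdefault(boundary_id, {})[letter] = "\n".join(buffer)

    for raw in lines:
        line = raw.rstrip("\n")
        match = BOUNDARY.match(line)
        if match:
            flush()
            current = (match.group(1), match.group(2))
            buffer = []
        elif current is not None:
            buffer.append(line)
    flush()
    return transactions


def summarise(sections):
    """Pull the unique ID, source IP and matched rule IDs out of one transaction."""
    header = HEADER.match(sections.get("A", ""))
    return {
        "unique_id": header.group(1) if header else None,
        "source_ip": header.group(2) if header else None,
        "rule_ids": RULE_ID.findall(sections.get("H", "")),
    }


# Invented sample entry, for illustration only.
SAMPLE = """\
--c7036611-A--
[09/Jan/2015:10:00:00 +0000] VvJviwrRBDgAAOquv9gAAAAG 203.0.113.5 51234 192.0.2.1 80
--c7036611-B--
POST /comment/reply/1 HTTP/1.1
Host: example.com

--c7036611-H--
Message: Warning. Pattern match [id "950109"] [msg "example message"]
Message: Warning. Operator GE matched [id "981176"] [msg "example message"]
--c7036611-Z--
"""

if __name__ == "__main__":
    for boundary_id, sections in split_transactions(SAMPLE.splitlines()).items():
        print(boundary_id, summarise(sections))
```

Once each transaction is a single record like this, filtering by IP address or rule ID becomes a simple lookup rather than a multi-line text search.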

Initial Solutions

My first attempt at tackling the problem was to remove the rules that were being triggered at certain locations. To do this I wrote a BASH script. The script doesn't look at the audit log file; it just uses the error messages ModSecurity writes to the Apache log, and spits out a virtualhost configuration file listing locations (URLs) where certain rules are disabled.

This would work OK if you were running ModSecurity in "traditional" mode, where any rule that is matched results in the request being blocked, but it isn't good for the new anomaly scoring mode (the one that enumerates badness). In the anomaly scoring mode, each rule has a point score and the request is blocked if the total score passes a threshold... I soon realised that my script was actually just removing the rule that adds up the scores and blocks the request, when it should have been removing the individual rules! This wasn't good enough.

I realised I needed a more fine-tuned approach, so I learned some Perl. Perl can do multiline regex (slowly!), which enabled me to look at the audit log instead of the error log. The Perl script I wrote splits the audit log into bits and puts it into a spreadsheet. This is the same fundamental approach as my C++ app, but the spreadsheet quickly becomes extremely sluggish, and the script takes ages to run. It does work, though!
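The problem with removing the blocking rule in anomaly scoring mode can be shown with a toy model in Python. This is only a sketch: the rule names, scores and threshold are invented, and it assumes a request is blocked when the total score is greater than or equal to the threshold.

```python
# Hypothetical rules and scores, for illustration only.
SCORES = {"rule_a": 3, "rule_b": 2, "rule_c": 5}
THRESHOLD = 5


def is_blocked(matched, disabled=(), blocking_rule_enabled=True):
    """Decide whether a request is blocked in anomaly scoring mode.

    matched: names of the rules the request triggered
    disabled: individual rules switched off at this location
    blocking_rule_enabled: False models removing the rule that sums the
        scores and blocks the request (what the BASH script ended up doing)
    """
    if not blocking_rule_enabled:
        return False  # nothing is ever blocked at this location
    total = sum(SCORES[rule] for rule in matched if rule not in disabled)
    return total >= THRESHOLD


if __name__ == "__main__":
    # A legitimate request that trips rule_a and rule_b is a false positive.
    print(is_blocked(["rule_a", "rule_b"]))
    # Disabling only rule_b lets it through...
    print(is_blocked(["rule_a", "rule_b"], disabled={"rule_b"}))
    # ...while a request that also trips rule_c is still blocked.
    print(is_blocked(["rule_b", "rule_c"], disabled={"rule_b"}))
    # Removing the blocking rule lets everything through.
    print(is_blocked(["rule_b", "rule_c"], blocking_rule_enabled=False))
```

Disabling an individual rule only subtracts that rule's contribution, so other rules still protect the location, whereas removing the blocking rule switches off protection there entirely.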

The Solution: auditlog2db commandline utility

So, after my partial success with Perl I decided I needed something serious to tackle the problem. I had read that C++ apps are generally faster than scripting languages like Perl, and wanted to learn the language that most of the apps in the Plasma Desktop environment (KDE) are written in. I had an idea that a sqlite database would be a good way to store the information from the audit logs so that it could be sorted quickly, but I didn't know any C++ or anything about sqlite. I learned:

- Some basic C++ (hair-tearingly frustrating at times but ultimately rewarding)

- How to use the C/C++ sqlite API (reasonably well documented but very confusing to someone writing their first C++ app)

- How to do regular expression matching in C++ using the Boost Regex library (much more difficult than Perl!)

- How to use a Makefile to make compilation less tedious.

The result is a C++ commandline utility called auditlog2db that will import the logfile into a sqlite3 database. It can process about 2000 transactions per second, which is about a bazillion times faster than the Perl script :D

As my code got more complicated, I realised I needed to use a proper version control system instead of just saving copies of files as foo.BAK, foo.BAK2 ... so I learned Git. Git is actually quite accessible and definitely worth learning.
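To show why a database makes the audit log easier to interrogate, here is a minimal sketch using Python's built-in sqlite3 module. The table and column names are hypothetical and are not the schema auditlog2db creates; the records are invented sample data. It answers the question that is awkward with grep: which requests from a given IP address triggered a given rule?

```python
import sqlite3

# Invented sample records, e.g. as produced by an audit log parser.
RECORDS = [
    {"unique_id": "txn1", "source_ip": "203.0.113.5",
     "uri": "/comment/reply/1", "rule_ids": ["950109", "981176"]},
    {"unique_id": "txn2", "source_ip": "203.0.113.5",
     "uri": "/node/add/article", "rule_ids": ["981176"]},
    {"unique_id": "txn3", "source_ip": "198.51.100.7",
     "uri": "/comment/reply/2", "rule_ids": ["950109"]},
]


def build_database(records, path=":memory:"):
    """Create a two-table database: one row per transaction, one per rule match."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE transactions ("
        "unique_id TEXT PRIMARY KEY, source_ip TEXT, uri TEXT)"
    )
    conn.execute(
        "CREATE TABLE matches ("
        "unique_id TEXT REFERENCES transactions(unique_id), rule_id TEXT)"
    )
    with conn:  # commit all inserts as a single transaction
        for rec in records:
            conn.execute(
                "INSERT INTO transactions VALUES (?, ?, ?)",
                (rec["unique_id"], rec["source_ip"], rec["uri"]),
            )
            conn.executemany(
                "INSERT INTO matches VALUES (?, ?)",
                [(rec["unique_id"], rule_id) for rule_id in rec["rule_ids"]],
            )
    return conn


def requests_matching(conn, source_ip, rule_id):
    """Return (unique_id, uri) for requests from source_ip that matched rule_id."""
    cursor = conn.execute(
        "SELECT t.unique_id, t.uri FROM transactions t "
        "JOIN matches m ON m.unique_id = t.unique_id "
        "WHERE t.source_ip = ? AND m.rule_id = ? "
        "ORDER BY t.unique_id",
        (source_ip, rule_id),
    )
    return cursor.fetchall()


if __name__ == "__main__":
    connection = build_database(RECORDS)
    print(requests_matching(connection, "203.0.113.5", "950109"))
```

Keeping rule matches in their own table means one transaction can match any number of rules, and the question becomes a single join.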

Packaging

So, at this point my code was on GitHub and it worked, but I doubted very much whether anyone would find it and use it. Seriously... in 2015, you shouldn't have to compile a program yourself unless you're actually developing it. Packaging my code for Ubuntu/Debian turned out to be almost as difficult as writing the damn program!

I started by learning the GNU build system, Autotools, to replace my handwritten Makefile with a more flexible one. Autotools is the group of programs used in the classic "configure, make, make install" procedure to check dependencies and create a makefile that installs everything to the correct place on your system and removes it cleanly again afterwards. Autotools turned out to be a nightmare. It is not at all easy to learn - something as simple as testing for C++11 support in the compiler and setting the appropriate flag should be easy, but it's not, and requires the use of some pretty archaic m4 macros. The documentation is sparse, and non-trivial example tutorials are hard to come by.

In defence of Autotools, once it is set up, the "configure, make, make install" procedure is easy to do - I can see why it was good in the days when end users were required to compile software. It also provides some nice features like "make dist", which creates a .tar.gz source archive for distribution - useful for starting a .deb! If I could start over, I think I would learn CMake, which is supposed to be easier.

Once this was all sorted, I set to work building a .deb package. The Ubuntu documentation can pretty much be summed up in one sentence:

"Build a package as you would for debian, but use the ubuntu release codename (utopic) instead of the Debian codename (unstable) in the changelog file."

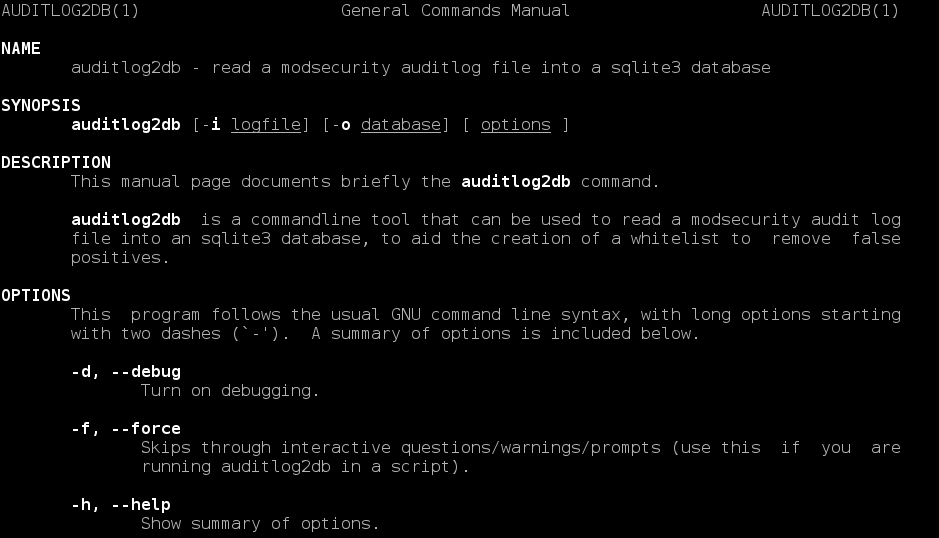

After battling my way through the Debian new maintainer's guide, I finally produced a package. However, the package checker lintian kicked up a load of errors. Some were trivial, like line lengths in the package description, but others were more serious. A manual file was missing. Yet another unpleasant surprise: manual files are written using nroff, a markup language even more difficult to learn than TeX, and a lot less useful. Luckily, it was possible to just borrow a lot of the markup from other manfiles, which are stored in /usr/share/man/man1/foo.1.gz. Take a look at a few using the zless command, and you'll see why I wasn't enthused at the prospect of writing one from scratch.

Distribution

Package completed, error free, it was time to upload to a PPA. The next surprise was that you can't just create a .deb, sign it, and upload it to a PPA. Launchpad builds the binaries itself from a source archive! This is great for quality control, since the packages are built in a sanitised chroot. In fact, this caught a few of my errors, like missing libsqlite3-dev and libboost-regex-dev from the build-depends field in the control file. These libraries were (obviously) installed on my laptop already, but they weren't present in the chroot, so the compiler failed during linking. After a bit of trial and error, I got Launchpad to build my app successfully :)

My PPA is here: https://launchpad.net/~sam-hobbs/+archive/ubuntu/ams-whitelisting-tools

Packages are available for 14.04 Trusty Tahr and 14.10 Utopic Unicorn. If you fancy helping me out (I'd really appreciate it!), you can add the PPA, install the package, test it and remove it. The package is called ams-whitelisting-tools because I plan on adding other utilities to the package later.

Obligatory warning: in general, you shouldn't add random PPAs to your system. Only do this if you trust me! The following code will add the PPA, install my utility, do some basic tests, and then remove the package and the PPA.

sudo add-apt-repository ppa:sam-hobbs/ams-whitelisting-tools

sudo apt-get update

sudo apt-get install ams-whitelisting-tools

man auditlog2db

auditlog2db --version

sudo apt-get remove --purge ams-whitelisting-tools

sudo add-apt-repository --remove ppa:sam-hobbs/ams-whitelisting-tools

If you have modsecurity installed, you can also run this command to generate a database from your audit log file:

auditlog2db -i /var/log/apache2/modsec_audit.log -o ~/modsecurity.db

The utility is still very much in development, but it is at the stage now where it could be useful to people and I'm very pleased to be able to release something! If you do any serious testing, I'd love to hear some feedback. I'm aware that I need to tighten up the --force and --quiet options, which were added recently.

Comments

Modsecurity

Hi Sam,

> If you have read my previous posts on Apache2's security module "ModSecurity"

That is what I am looking for: a starter guide to installation and introduction to OWASP rules for Raspberry Pi apache2. But I cannot find it...

https://samhobbs.co.uk/2015/02/whitelisting-tools-apache-modsecurity is already several steps on from the starting point I am wanting...

This refers to: "My previous articles about ModSecurity have discussed some of the cruder methods of removing false positives. " Can you point me to these?

I have found this: https://www.digitalocean.com/community/tutorials/how-to-set-up-mod_secu… but not sure how relevant it is...

Advice?

Thanks...John

I can write a tutorial if you like

Modsecurity

Hi Sam,

I would very much appreciate a tutorial on modsecurity - what to load; how to configure; where to find pre-requisites. It does not appear to be straight-forward so guidance from an expert is needed.

After fixing the https problem, I hunted around your web-site and somewhere (cannot remember where) I came across information on setting up virtual hosts, in particular, to deal with the case where a client uses an IP address and not a FQDN. I got this to work (I found that I had to use separate files in sites-available for each vhost). Then allowed my web-server to run outside my firewall and watched apache2/error.log for "client denied" attempts. As you mentioned in that piece, the entries are very interesting - robots.txt, webcalendar, testproxy.php,sitemap.xml...For the moment the https part of my webserver is behind a firewall.

Being able to improve security would be invaluable.

Thanks....John

Getting Started with Apache ModSecurity on Debian and Ubuntu

got the following message running the auditlog2db

Hello Sam

Thanks for sharing your work with us. I worked today on setting up a lab to test auditlog2db and everything went smoothly; however, I got the message below when I ran the application.

Command : auditlog2db -i logs/modsec_audit.log -o database/modsecurity23.db

Result : data was imported in the database file , with the below message

******************************************************************************************************

VvJviwrRBDgAAOquv9gAAAAG: Error - 200002 could not be found in the rule ID map

VvJviwrRBDgAAOquv9gAAAAG: error binding values for 200002 . Code 21 description: not an error

VvJvjgrRBDgAAOquv9kAAAAG: Error - 200002 could not be found in the rule ID map

VvJvjgrRBDgAAOquv9kAAAAG: error binding values for 200002 . Code 21 description: not an error

VvJvpwrRBDgAAOsMiegAAAAJ: Error - 200002 could not be found in the rule ID map

VvJvpwrRBDgAAOsMiegAAAAJ: error binding values for 200002 . Code 21 description: not an error

VvJvqgrRBDgAAOvwXT0AAAAB: Error - 200002 could not be found in the rule ID map

VvJvqgrRBDgAAOvwXT0AAAAB: error binding values for 200002 . Code 21 description: not an error

VvJvqwrRBDgAAOvwXT4AAAAB: Error - 200002 could not be found in the rule ID map

******************************************************************************************************

Away from this message everything is going fine so far

Thanks and best regards

Thank you for the feedback

auditlog2db isn't very good at the moment - I'm currently in the process of rewriting it using Qt types instead of the standard library, and this is one of the things I'm improving in the process. Hopefully I'll be able to add a viewer too (hence Qt), to make it easier to construct useful queries for viewing the database.

What I think happened is that auditlog2db tried to look up a value in a map, didn't find one, then tried to bind some invalid data (around line 1471 in logchop.cpp). Error 21 is SQLITE_MISUSE, which is probably because I tried to bind invalid data. I should have written the program to throw an error and stop/skip to the next record, but instead I printed an error and continued.

Thank you for testing, I hope the database is more useful to you than the plain log file.

Sam

question about modsecurity's script tool

Hi Sam,

How are you? ModSecurity is really exhausting my brain even though I am just a user. Really, really, really, thank you for your dedication to this field and for sharing these articles. I am referring to your whitelisting instructions here, which I decided to use to make things less painful. But I got a problem when I executed this command line:

sudo add-apt-repository ppa:sam-hobbs/ams-whitelisting-tools

The output is shown below.

You are about to add the following PPA to your system:

Whitelisting tools for Apache2's ModSecurity.

Provides a tool "auditlog2db" to import the ModSecurity audit log file into an sqlite3 database.

More info: https://launchpad.net/~sam-hobbs/+archive/ubuntu/ams-whitelisting-tools

Press [ENTER] to continue or ctrl-c to cancel adding it

Traceback (most recent call last):

File "/usr/bin/add-apt-repository", line 167, in

sp = SoftwareProperties(options=options)

File "/usr/lib/python3/dist-packages/softwareproperties/SoftwareProperties.py", line 105, in __init__

self.reload_sourceslist()

File "/usr/lib/python3/dist-packages/softwareproperties/SoftwareProperties.py", line 595, in reload_sourceslist

self.distro.get_sources(self.sourceslist)

File "/usr/lib/python3/dist-packages/aptsources/distro.py", line 89, in get_sources

(self.id, self.codename))

aptsources.distro.NoDistroTemplateException: Error: could not find a distribution template for Raspbian/jessie

When I switched it on from DetectionOnly, I got a problem: I could not create an article on my Drupal. Then I switched it off. It works, and I left the article's author blank as anonymous. The other question is about the script you wrote. There are folders such as admin, comment, files, node, sites, etc. Where exactly are they located on my Pi?

SPECIAL_LOCATIONS="\

/authorize.php \

/admin/config$ \

/admin/config/content/mollom \

/admin/config/content/syntaxhighlighter \

/admin/config/people \

/admin/config/search \

/admin/config/system/actions \

/admin/content \

/admin/reports \

/admin/structure/menu \

/admin/structure/types \

/admin/modules \

/admin/appearance/settings \

/admin/people/permissions \

/comment/reply \

/comment/[0-9]+$ \

/comment/[0-9]+/edit \

/comment/[0-9]+/approve \

/file/ajax/field_image/und/0/ \

/index.php$ \

/node/[0-9]+/delete$ \

/node/[0-9]+/edit$ \

/node/add/article \

/sites/default/files/css/ \

/sites/default/files/js \

/token/tree \

/user/[0-9]+/edit$ \

.*.(png|jpg|JPG|gif|ico)$"

Also, you mention the URL domain that should be set in the script. My domain has no alias name. Should I put my domain in the script and write a * with my domain? I am a little confused here.

Above all, thank you very much for your dedication.

Jeff

wouldn't recommend the script for anomaly scoring mode

Few questions about setting

Hi Sam,

Thanks for your reply. I have been following your instructions, but I have some questions and would like your feedback.

Firstly, regarding the section "Log all comments, not just ones with high anomaly scores": where do I put this rule?

# log all comments to auditlog

SecRule REQUEST_METHOD "@streq post" \

"chain, \

id:'000081', \

phase:1, \

t:none, \

t:lowercase, \

t:normalisePath, \

msg:'All comments logged to auditlog'"

SecRule REQUEST_URI "@rx ^(/comment/reply(/\d+)*|/comment/\d+/edit)$" \

"setvar:'tx.msg=%{rule.msg}', \

nolog,auditlog"

Secondly, I have installed Drupal and Squirrelmail. Drupal is in /usr/share/drupal7, with a virtualhost I set up. Is the rule you mention below the place to set up my Drupal?

"Put the rule engine in DetectionOnly mode for new apps or a specific path"

SecRule REQUEST_URI "@beginsWith /webapp" \

"id:'000080', \

phase:1, \

t:none, \

ctl:ruleEngine=DetectionOnly, \

nolog, \

pass"

Also, do I need to set my Drupal admin account here? If there is more than one account, how do I set them properly?

SecRule REQUEST_URI "@beginsWith /admin" \

"id:'000003', \

phase:1, \

t:none, \

ctl:RuleEngine=Off, \

nolog, \

pass"

Thirdly, regarding the section "Allow multiple URL encoding in comments": I haven't set up a website with my Drupal yet. "node" and "body" are the keywords, aren't they? If they are, how do I set them up?

SecRule REQUEST_URI "@beginsWith /node" \

"id:'000006', \

phase:2, \

t:none, \

ctl:ruleRemoveTargetById=950109;ARGS:body, \

nolog, \

pass"

Given my virtualhost setup, how should I take it into account when setting up ModSecurity?

Jeff

Path vs URL
